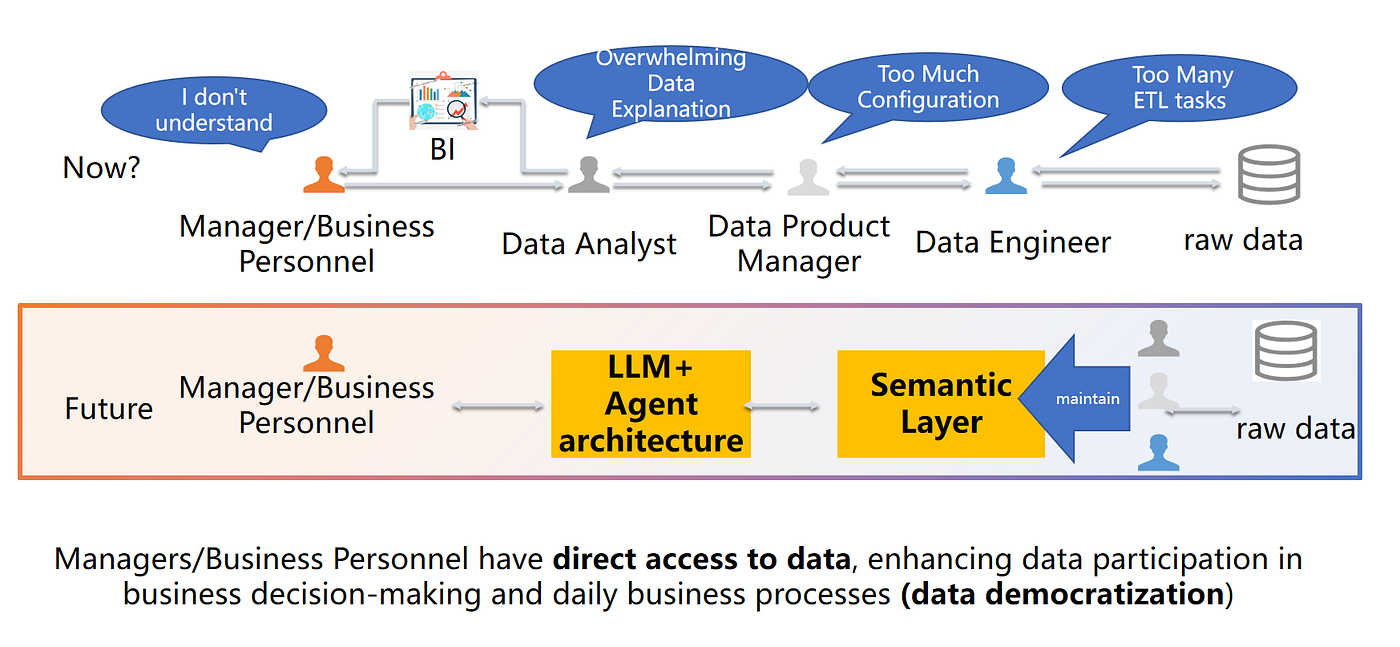

Enterprise data teams aren’t short on tools — they’re short on *accessibility that stays correct*.

Most organizations still have a familiar split:

- A small group of analysts can explore data confidently

- Everyone else waits in a queue for answers, dashboards, or exports

Agentic Data Analytics is a practical path out of that bottleneck, but only if it’s built on the right foundation.

This article explains the core idea and the architecture that makes it reliable: agent architecture + a semantic layer.

Introduction: The Data Accessibility Crisis

Companies collect more data than ever, yet most employees can’t use it day-to-day.

The common failure mode isn’t “people don’t care.” It’s this workflow:

- A manager asks a question

- The question bounces through tools, dashboards, analysts, and engineering

- The definition of the metric changes mid-thread

- The answer arrives too late to matter

Agentic Data Analytics targets the architecture behind that pain: it reduces handoffs while keeping business meaning and governance intact.

What Is Agentic Data Analytics?

Agentic Data Analytics is not “a chatbot that writes SQL.”

It’s an AI system that can plan, execute, validate, and iterate through multi-step analysis while staying grounded in your organization’s definitions.

At a high level, an agentic analyst should be able to:

- Understand business intent (not just query syntax)

- Break complex questions into smaller analytical tasks

- Use governed business definitions (metrics, dimensions, rules)

- Validate results and handle edge cases

- Carry context across follow-ups (“break that down by region”)
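The loop described above (plan, execute, validate, iterate, and carry context) can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not a reference implementation: `Step`, `run_plan`, and `follow_up` are hypothetical names, and the `execute`, `validate`, and `repair` callables stand in for a real query service, real result checks, and an LLM that revises a failed step.

```python
from dataclasses import dataclass, replace
from typing import Callable, Optional


@dataclass(frozen=True)
class Step:
    """One analytical sub-task expressed in governed terms (hypothetical shape)."""
    metric: str
    dimensions: tuple[str, ...] = ()


def run_plan(
    steps: list[Step],
    execute: Callable[[Step], list[dict]],
    validate: Callable[[Step, list[dict]], Optional[str]],
    repair: Callable[[Step, str], Step],
    max_attempts: int = 3,
) -> list[list[dict]]:
    """Run each planned step, validate its result, and retry with a repaired step."""
    results = []
    for step in steps:
        current = step
        for _ in range(max_attempts):
            rows = execute(current)
            problem = validate(current, rows)
            if problem is None:  # result passed validation
                results.append(rows)
                break
            # Iterate: revise the step using the validation message.
            current = repair(current, problem)
        else:
            raise RuntimeError(f"{step} failed validation {max_attempts} times")
    return results


def follow_up(previous: Step, extra_dimension: str) -> Step:
    """'Break that down by region': keep the prior metric, add one grouping."""
    if extra_dimension in previous.dimensions:
        return previous
    return replace(previous, dimensions=previous.dimensions + (extra_dimension,))


# Toy stand-ins: a misspelled metric returns nothing until it is repaired.
def fake_execute(step: Step) -> list[dict]:
    return [{"metric": step.metric, "value": 100}] if step.metric == "revenue" else []


def fake_validate(step: Step, rows: list[dict]) -> Optional[str]:
    return None if rows else "empty result; check the metric name"


def fake_repair(step: Step, problem: str) -> Step:
    return replace(step, metric="revenue")


print(run_plan([Step("revenu")], fake_execute, fake_validate, fake_repair))
# [[{'metric': 'revenue', 'value': 100}]]
print(follow_up(Step("revenue", ("month",)), "region").dimensions)
# ('month', 'region')
```

The follow-up works because the agent keeps the previous step as structured state rather than re-parsing the whole conversation.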

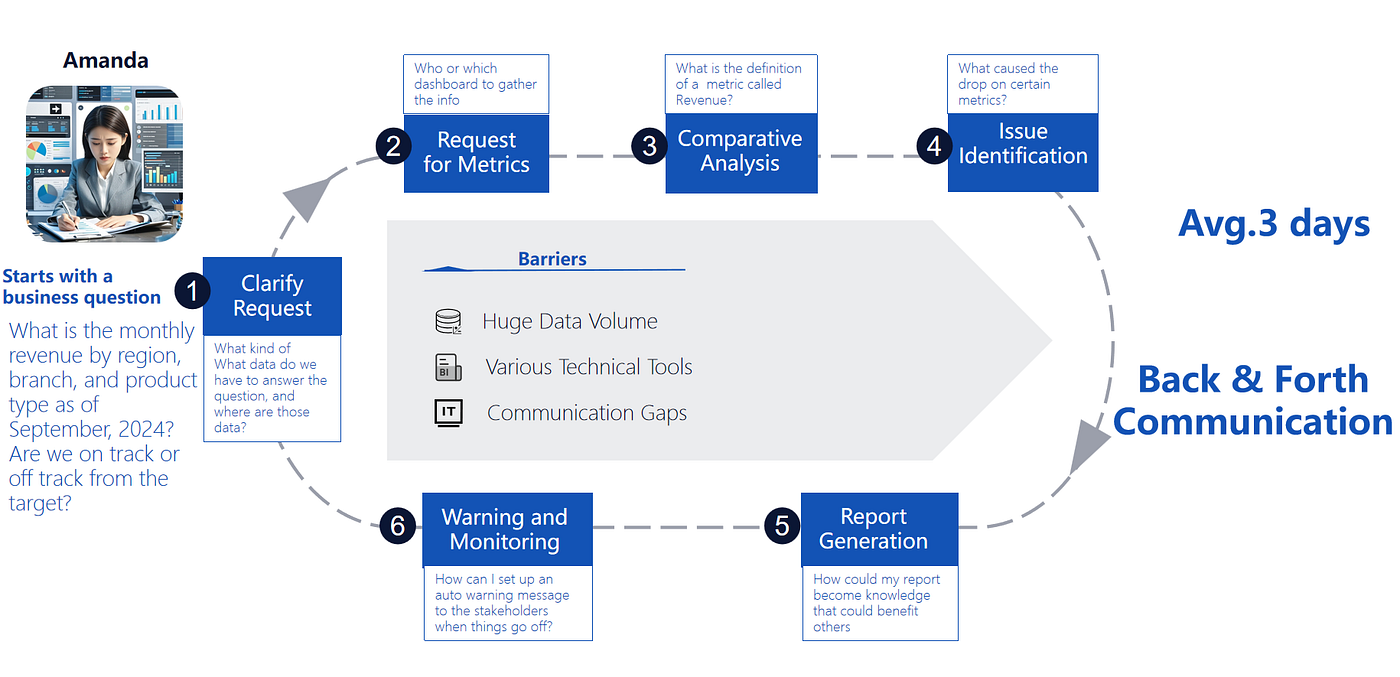

Who Benefits: The “Amanda” Problem

Consider Amanda, a manager who asks:

> “What is monthly revenue by region, branch, and product type as of September 2024? Are we on or off track vs target?”

That “one question” typically becomes a chain of requests:

- Clarify what data exists and where it lives

- Align on metric definitions (what counts as revenue?)

- Investigate anomalies

- Turn results into a shareable report

- Set up monitoring or alerts for the future

Agentic analytics cuts out most of that back-and-forth by letting business users ask directly while the system handles data discovery, metric definitions, validation, and reporting behind the scenes.

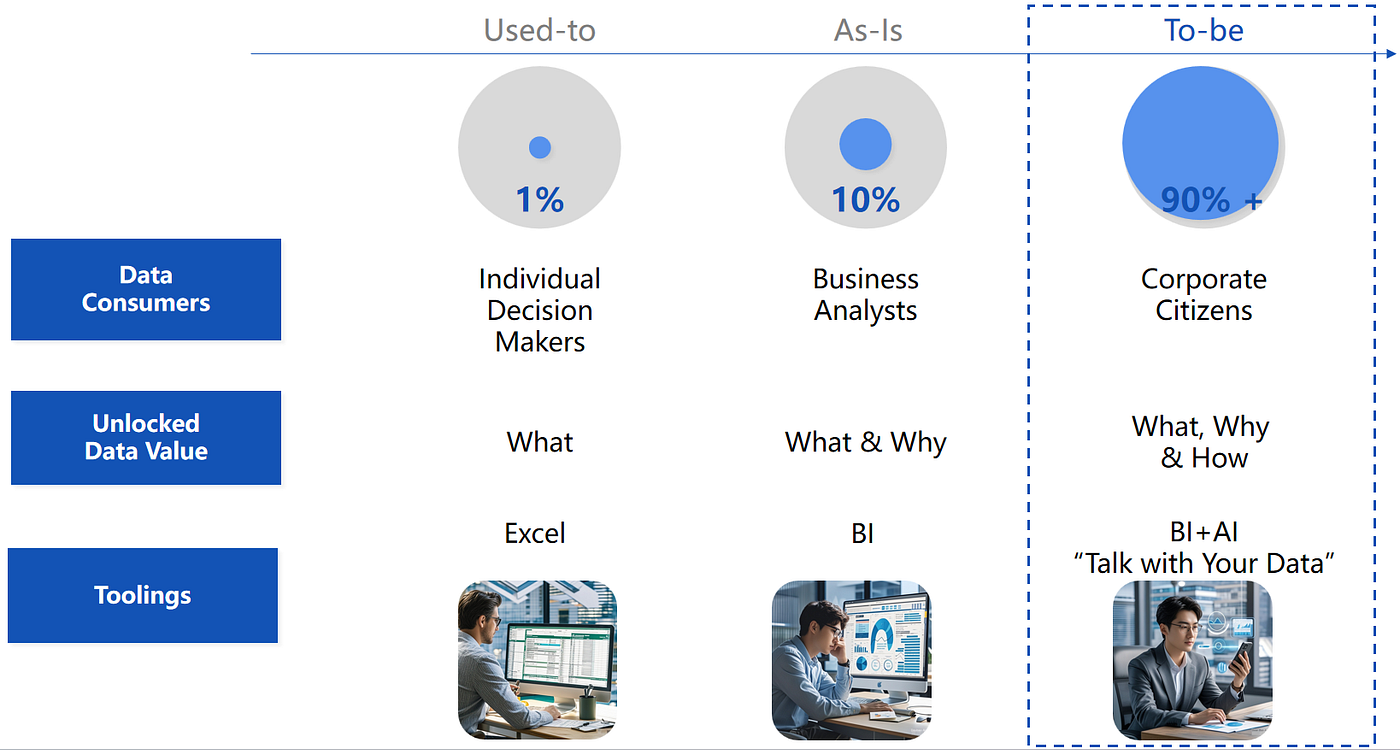

The Three Eras of Data Analysis

It helps to view agentic analytics as an evolution, not a replacement.

Era 1: The Excel Age

- Data consumers: ~1%

- Value unlocked: “What” (basic descriptive)

- Tooling: spreadsheets and manual workflows

Era 2: The BI Age

- Data consumers: ~10%

- Value unlocked: “What & Why” (descriptive + diagnostic)

- Tooling: dashboards, filters, and visualization layers

Era 3: The AI Conversation Age

- Data consumers: 90%+

- Value unlocked: “What, Why & How” (descriptive + diagnostic + prescriptive)

- Tooling: BI + AI, where analysis is guided by conversation

The promise is real — but reliability is the barrier.

Why LLMs Alone Don’t Deliver Reliable Enterprise Analytics

In enterprise environments, direct “natural language → SQL” fails in predictable ways:

- Missing business context: “Revenue” can mean gross, net, or recognized revenue, depending on which team is asking.

- Opaque schemas: Column names rarely explain themselves.

- Join complexity: Warehouses have hundreds of tables with brittle join logic.

- Embedded rules: Transformations and exclusions live in pipeline code, not in table or column names.

This is why organizations get confident-looking, wrong answers.

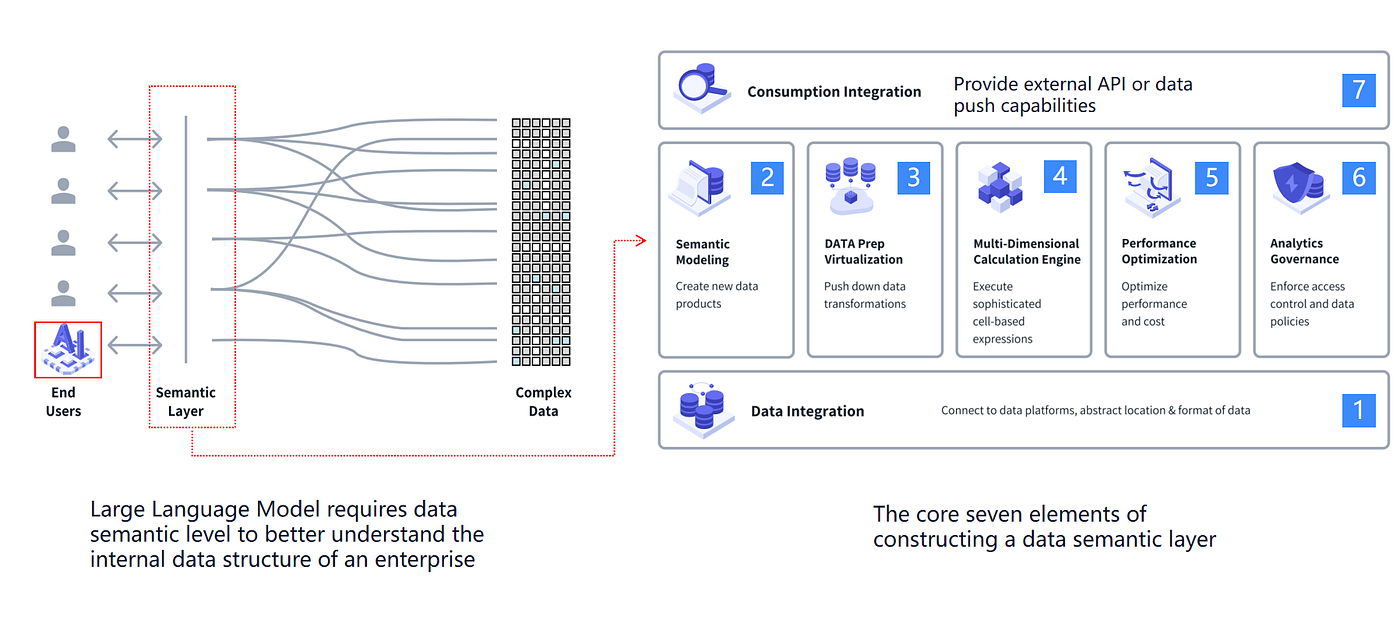

The Semantic Layer: The Foundation of Reliability

A semantic layer sits between end users (and AI) and raw data systems, mapping business concepts to technical implementations.

It turns:

- “Revenue” into a governed metric definition

- “Active customer” into a consistent rule

- “Region” into the correct dimension mapping
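To make the mapping concrete, here is a minimal sketch of governed metric and dimension definitions compiled into SQL. The `orders` table, its columns, and the filter rules are hypothetical, and the `month` expression assumes SQLite's `strftime`; real semantic layers use their own modeling languages. The point is that the business rules (here, only completed, non-test orders count as revenue) live inside the definition, so every consumer gets the same logic.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Metric:
    name: str
    expression: str                  # aggregation over the source table
    source: str                      # table (with alias) the metric is defined on
    filters: tuple[str, ...] = ()    # business rules that always apply


@dataclass(frozen=True)
class Dimension:
    name: str
    column: str                      # SQL expression backing the business term


# Hypothetical governed definitions agreed with business stakeholders.
METRICS = {
    "revenue": Metric(
        name="revenue",
        expression="SUM(o.amount)",
        source="orders o",
        filters=("o.status = 'completed'", "o.is_test = 0"),
    ),
}
DIMENSIONS = {
    "region": Dimension("region", "o.region"),
    "month": Dimension("month", "strftime('%Y-%m', o.order_date)"),
}


def compile_query(metric: str, dimensions: list[str]) -> str:
    """Translate business terms into SQL using only governed definitions."""
    m = METRICS[metric]                           # KeyError for unknown metrics
    dims = [DIMENSIONS[d] for d in dimensions]    # KeyError for unknown dimensions
    select = [f"{d.column} AS {d.name}" for d in dims] + [f"{m.expression} AS {m.name}"]
    sql = f"SELECT {', '.join(select)} FROM {m.source}"
    if m.filters:
        sql += " WHERE " + " AND ".join(m.filters)
    if dims:
        sql += " GROUP BY " + ", ".join(d.column for d in dims)
    return sql


print(compile_query("revenue", ["region"]))
# Prints one line (wrapped here for readability):
# SELECT o.region AS region, SUM(o.amount) AS revenue FROM orders o
#   WHERE o.status = 'completed' AND o.is_test = 0 GROUP BY o.region
```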

The Core Elements of a Strong Semantic Layer

While implementations vary, most enterprise semantic layers need:

- Data integration across platforms

- Semantic modeling (metrics + dimensions that match business concepts)

- Transformation virtualization / pushdown

- A calculation engine for consistent metric logic

- Performance optimization

- Governance (RBAC, policies, PII handling)

- Consumption integration (APIs, BI tools, embedded use cases)

Ontology + Semantic Layer: Making Meaning Machine-Readable

In data systems, an ontology defines:

- Entities (customers, orders, transactions)

- Attributes (date, amount, status)

- Relationships (orders contain products)

- Rules (constraints and logic)
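An ontology like this can be written down as plain data structures that a program can check records against. The sketch below is illustrative only: the `Ontology`, `Entity`, `Relationship`, and `Rule` classes and the example rule are hypothetical, and production systems typically express this in a modeling language or in the semantic layer's own schema.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class Entity:
    name: str
    attributes: dict[str, type]         # attribute name -> expected Python type


@dataclass(frozen=True)
class Relationship:
    source: str
    verb: str
    target: str


@dataclass
class Rule:
    entity: str
    name: str
    check: Callable[[dict], bool]       # returns True when the record is valid


@dataclass
class Ontology:
    entities: dict[str, Entity] = field(default_factory=dict)
    relationships: list[Relationship] = field(default_factory=list)
    rules: list[Rule] = field(default_factory=list)

    def add_entity(self, entity: Entity) -> None:
        self.entities[entity.name] = entity

    def relate(self, source: str, verb: str, target: str) -> None:
        # Relationships may only connect entities the ontology already knows.
        for name in (source, target):
            if name not in self.entities:
                raise KeyError(f"unknown entity '{name}'")
        self.relationships.append(Relationship(source, verb, target))

    def add_rule(self, rule: Rule) -> None:
        self.rules.append(rule)

    def validate(self, entity_name: str, record: dict) -> list[str]:
        """Return a list of violations (empty if the record conforms)."""
        entity = self.entities[entity_name]
        problems = [
            f"{attr} should be {expected.__name__}"
            for attr, expected in entity.attributes.items()
            if not isinstance(record.get(attr), expected)
        ]
        if problems:  # skip rules when the record's shape is already wrong
            return problems
        return [
            f"rule failed: {rule.name}"
            for rule in self.rules
            if rule.entity == entity_name and not rule.check(record)
        ]


onto = Ontology()
onto.add_entity(Entity("order", {"date": str, "amount": float, "status": str}))
onto.add_entity(Entity("product", {"sku": str}))
onto.relate("order", "contains", "product")
onto.add_rule(Rule("order", "amount_non_negative", lambda r: r["amount"] >= 0))

print(onto.validate("order", {"date": "2024-09-01", "amount": -5.0, "status": "completed"}))
# ['rule failed: amount_non_negative']
```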

A semantic layer is often the most practical way to implement that ontology for analytics.

When metrics and dimensions are codified, agents can do semantic reasoning:

- Disambiguate terms (“gross vs. net revenue”)

- Infer groupings (“premium customers”)

- Keep definitions consistent across teams
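Disambiguation can be as simple as refusing to guess. In this minimal sketch, business phrases map to governed metric IDs, and a phrase with more than one candidate raises an error that the agent can turn into a clarifying question. The synonym table and the metric IDs (`gross_revenue`, `net_revenue`) are hypothetical.

```python
class AmbiguousTerm(Exception):
    """Raised when a business term maps to more than one governed metric."""

    def __init__(self, term: str, candidates: list[str]):
        super().__init__(f"'{term}' could mean: {', '.join(candidates)}")
        self.candidates = candidates


# Hypothetical synonym table maintained alongside the semantic layer.
SYNONYMS = {
    "revenue": ["gross_revenue", "net_revenue"],  # ambiguous on purpose
    "gross revenue": ["gross_revenue"],
    "net revenue": ["net_revenue"],
    "sales": ["gross_revenue"],
}


def resolve_metric(term: str) -> str:
    """Map a user's phrase to exactly one governed metric, or fail loudly."""
    candidates = SYNONYMS.get(term.strip().lower())
    if not candidates:
        raise LookupError(f"'{term}' is not a governed metric")
    if len(candidates) > 1:
        raise AmbiguousTerm(term, candidates)
    return candidates[0]


print(resolve_metric("Net Revenue"))  # net_revenue
try:
    resolve_metric("revenue")
except AmbiguousTerm as err:
    print(err)  # 'revenue' could mean: gross_revenue, net_revenue
```

The design choice is to fail closed: an unknown term raises `LookupError`, so the agent cannot silently substitute a similarly named column.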

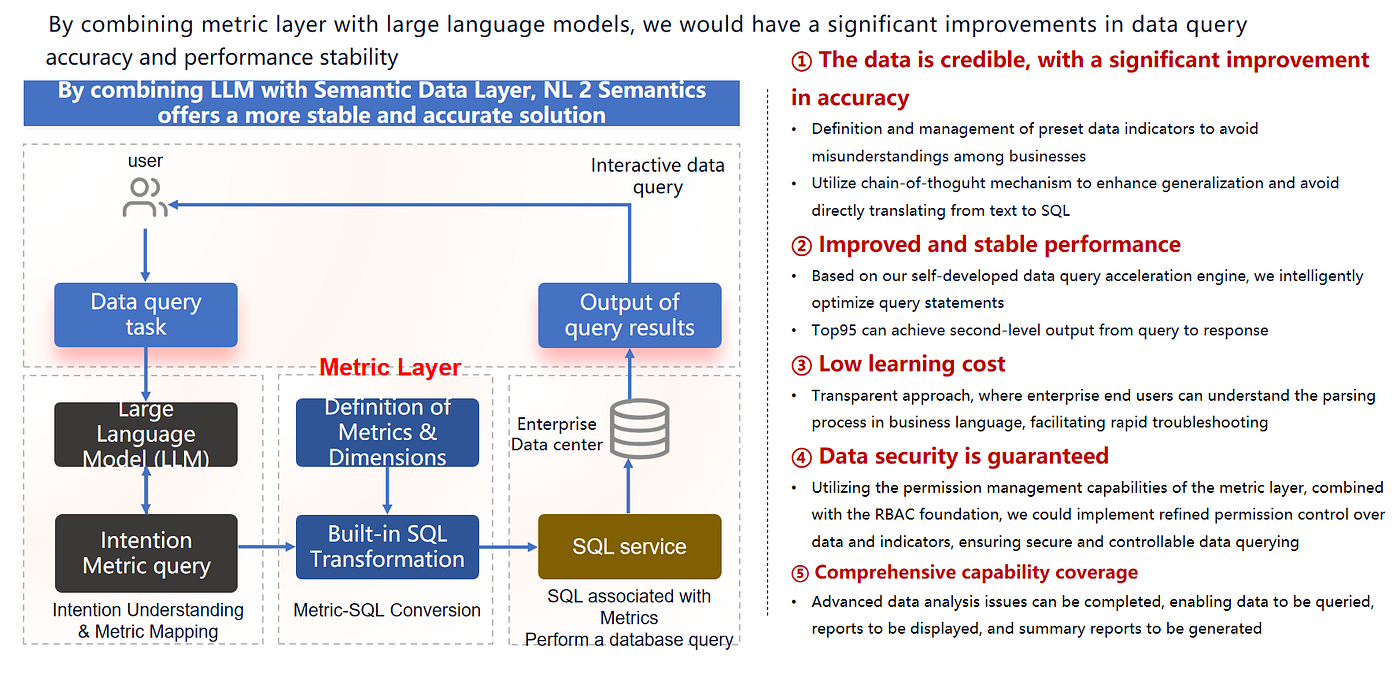

Why Agent Architecture + Semantic Layer Beats Pure LLM-to-SQL

Instead of asking an LLM to generate raw SQL, the better flow is:

- Interpret intent

- Map to governed metrics/dimensions in the semantic layer

- Use validated transformations (metric-layer SQL)

- Execute through a controlled query service

- Return results that are explorable via follow-ups
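This flow can be sketched end to end with Python's built-in `sqlite3` module. Everything here is a hypothetical stand-in: `interpret` uses keyword matching where a real agent would use an LLM, the governed definitions are a tiny dictionary, and `run_query` plays the controlled query service by rejecting anything that is not a `SELECT` and capping the rows returned (a naive guard, not production-grade security).

```python
import sqlite3

# Hypothetical governed definitions: metric -> (aggregation, mandatory filter).
METRICS = {"revenue": ("SUM(amount)", "status = 'completed'")}
DIMENSIONS = {"region": "region", "product_type": "product_type"}


def interpret(question: str) -> dict:
    """Step 1 (stubbed): find governed terms in the question by keyword."""
    q = question.lower()
    metric = next((m for m in METRICS if m in q), None)
    if metric is None:
        raise LookupError("no governed metric found in the question")
    dims = [d for d in DIMENSIONS if d.replace("_", " ") in q]
    return {"metric": metric, "dimensions": dims}


def to_sql(intent: dict) -> str:
    """Steps 2-3: map intent to governed definitions and emit vetted SQL."""
    expression, rule = METRICS[intent["metric"]]
    cols = [DIMENSIONS[d] for d in intent["dimensions"]]
    select = ", ".join(cols + [f"{expression} AS {intent['metric']}"])
    sql = f"SELECT {select} FROM orders WHERE {rule}"
    if cols:
        grouping = ", ".join(cols)
        sql += f" GROUP BY {grouping} ORDER BY {grouping}"
    return sql


def run_query(conn: sqlite3.Connection, sql: str, max_rows: int = 1000) -> list[tuple]:
    """Step 4: a naive controlled query service (SELECT only, capped result)."""
    if not sql.lstrip().upper().startswith("SELECT"):
        raise PermissionError("only SELECT statements are allowed")
    return conn.execute(sql).fetchmany(max_rows)


conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE orders (region TEXT, product_type TEXT, amount REAL, status TEXT)")
conn.executemany(
    "INSERT INTO orders VALUES (?, ?, ?, ?)",
    [
        ("EU", "hardware", 100.0, "completed"),
        ("EU", "software", 50.0, "completed"),
        ("US", "hardware", 80.0, "refunded"),  # excluded by the governed rule
        ("US", "software", 70.0, "completed"),
    ],
)

intent = interpret("What is revenue by region?")
print(run_query(conn, to_sql(intent)))
# [('EU', 150.0), ('US', 70.0)]

# Step 5: a follow-up ("now by product type too") refines the same intent.
follow_up = {**intent, "dimensions": intent["dimensions"] + ["product_type"]}
print(run_query(conn, to_sql(follow_up)))
# [('EU', 'hardware', 100.0), ('EU', 'software', 50.0), ('US', 'software', 70.0)]
```

Note that the model never writes the `WHERE status = 'completed'` clause itself; it only picks governed names, and the refunded order is excluded regardless of how the question was phrased.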

Common Failure Modes This Avoids

- Schema hallucinations (tables that don’t exist)

- Incorrect joins (especially multi-hop and self-referential)

- Business logic drift (wrong filters, missing exclusions)

- Query performance disasters (full-table scans)

- Security blind spots (permissions, PII exposure)
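Several of these failure modes can be caught before any SQL runs by checking a request against the semantic model. This sketch is a hypothetical illustration: the `SemanticModel` fields, the approved-join set, and the rule that every query needs a date range are assumptions chosen to mirror the list above. Business-logic drift is not re-checked here because, in this design, filters live inside the metric definitions themselves.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class SemanticModel:
    metrics: dict[str, str]             # metric -> table it is defined on
    dimensions: dict[str, str]          # dimension -> table it lives on
    joins: set[frozenset]               # table pairs with an approved join path
    pii_dimensions: set[str] = field(default_factory=set)


@dataclass
class Request:
    metric: str
    dimensions: list[str] = field(default_factory=list)
    date_range: Optional[tuple[str, str]] = None


def check_request(model: SemanticModel, req: Request) -> list[str]:
    """Return the problems found in a request (empty list means it may run)."""
    # Schema hallucinations: only governed names are allowed.
    issues = [] if req.metric in model.metrics else [f"unknown metric '{req.metric}'"]
    issues += [f"unknown dimension '{d}'" for d in req.dimensions if d not in model.dimensions]
    if issues:
        return issues
    base = model.metrics[req.metric]
    for d in req.dimensions:
        table = model.dimensions[d]
        # Incorrect joins: only approved table pairs may be combined.
        if table != base and frozenset((base, table)) not in model.joins:
            issues.append(f"no governed join from {base} to {table}")
        # Security blind spots: PII needs an explicit grant.
        if d in model.pii_dimensions:
            issues.append(f"'{d}' is PII and needs explicit permission")
    # Performance: refuse unbounded scans over the whole history.
    if req.date_range is None:
        issues.append("a date range is required")
    return issues


model = SemanticModel(
    metrics={"revenue": "orders"},
    dimensions={"region": "branches", "customer_email": "customers"},
    joins={frozenset(("orders", "branches"))},
    pii_dimensions={"customer_email"},
)
print(check_request(model, Request("revenue", ["region"], ("2024-09-01", "2024-09-30"))))  # []
print(check_request(model, Request("revenu", ["region"])))  # ["unknown metric 'revenu'"]
```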

Why It Works Better in Practice

The combination provides:

- Credibility: shared metric definitions reduce cross-team debates

- Stable performance: optimized, reusable query plans

- Lower learning cost: users can see how their question was mapped to metrics

- Security: RBAC and governance are enforced at the semantic layer

- End-to-end workflows: query → visualize → summarize → share → monitor
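Enforcing security at the semantic layer means the policy is applied after the agent has produced its request, so a cleverly worded prompt cannot remove it. A minimal sketch, assuming hypothetical roles, a per-role metric allow-list, and a row-level filter appended to the query's WHERE terms:

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Policy:
    allowed_metrics: frozenset[str]
    row_filter: Optional[str] = None    # row-level security predicate, if any


# Hypothetical role policies managed by the data team, not by the agent.
POLICIES = {
    "eu_regional_manager": Policy(frozenset({"revenue"}), "region = 'EU'"),
    "finance": Policy(frozenset({"revenue", "margin"})),
}


def apply_policy(role: str, metric: str, where: list[str]) -> list[str]:
    """Check metric access for a role and add its row filter to the WHERE terms."""
    policy = POLICIES.get(role)
    if policy is None or metric not in policy.allowed_metrics:
        raise PermissionError(f"role '{role}' may not query '{metric}'")
    terms = list(where)                 # copy: never mutate the caller's list
    if policy.row_filter:
        terms.append(policy.row_filter)
    return terms


print(apply_policy("eu_regional_manager", "revenue", ["status = 'completed'"]))
# ["status = 'completed'", "region = 'EU'"]
print(apply_policy("finance", "margin", []))  # []
```

Because the check lives in the layer rather than in the agent, the same policy would also govern BI tools and APIs that consume the same definitions.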

Practical Guidance for Teams Adopting Agentic Analytics

If you’re building (or buying) an agentic analytics platform, start here:

1) Invest in the semantic layer first

Define metrics and dimensions with business stakeholders. AI can’t fix undefined meaning.

2) Prefer true agents over “chat with your data” wrappers

Multi-step planning, validation, and governed execution are not optional at enterprise scale.

3) Plan for continuous iteration

Semantic definitions evolve as your business changes. Treat them as products.

4) Measure outcomes that matter

- Does the result match what a good analyst would produce?

- How much did cycle time drop?

- How many users became self-serve?

- How many analyst “explain this dashboard” pings disappeared?

Conclusion: Data Democratization Is Finally Practical

The goal isn’t to replace analysts. It’s to extend their impact:

- Analysts codify definitions and governance

- Agents make those definitions accessible to everyone

When business users can ask and iterate safely, with the semantic layer keeping answers grounded, data stops being a bottleneck and starts being a competitive advantage.

Key Takeaways

- Direct LLM-to-SQL breaks on schemas, joins, business rules, performance, and security.

- A semantic layer provides the meaning, governance, and consistency AI needs.

- Agent architecture turns questions into multi-step, validated analysis.

- Together, they enable reliable AI business intelligence for the 90%, not just the 10%.